Use dynamic import instead of require to load the incremental cache handler, so that it still works when using ESM.

Updated the tests and merged them into a new test suite that includes 3 cases of custom cache definition:

- CJS with `module.exports`

- CJS with `exports.default` with ESM mark

- ESM with `export default`
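The three module shapes listed above can be normalized after a dynamic `import()`. The following is a minimal sketch under stated assumptions, not the actual Next.js loader: `interopDefault` is a hypothetical helper, and the sample objects stand in for the namespace objects that `import()` would return for each kind of file.

```javascript
// Hypothetical helper: unwrap whatever `await import(path)` returned into
// the cache handler itself.
function interopDefault(ns) {
  // For both ESM and CJS files, import() exposes the main value as `default`.
  const first = ns.default ?? ns;
  // CJS output carrying the ESM mark (`exports.__esModule = true` plus
  // `exports.default = X`) needs a second unwrap.
  if (first && first.__esModule && 'default' in first) {
    return first.default;
  }
  return first;
}

class CustomCache {}

// Stand-ins for the three namespace shapes (assumed for illustration).
const cjsModuleExports = { default: CustomCache }; // module.exports = X
const cjsWithEsmMark = { default: { __esModule: true, default: CustomCache } };
const esmExportDefault = { default: CustomCache }; // export default X
```

All three shapes resolve to the same `CustomCache` class once passed through the helper.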

Closes NEXT-1924

Fixes #58509

Previously when running deployment tests, the testing infrastructure

used the Vercel REST API to manage and work with deployments to perform

the actual testing. This now utilizes the Vercel CLI instead (while maintaining the same behaviour as before) to simplify the implementation.

In cases where testing is performed against a locally configured Vercel

CLI that's already authenticated it will now use those pre-configured

credentials.

Closes NEXT-1841

### What?

While scrolled on a page, and when following a link to a new page and

clicking the browser back button or using `router.back()`, the scroll

position would sometimes restore scroll to the incorrect spot (in the

case of the test added in this PR, it'd scroll you back to the top of

the list)

### Why?

The refactor in #56497 changed the way router actions are processed:

specifically, all actions were assumed to be async, even if they could

be handled synchronously. For most actions this is fine, as most are

currently async. However, `ACTION_RESTORE` (triggered when the

`popstate` event occurs) isn't async, and introducing a small amount of

delay in the handling of this action can cause the browser to not

properly restore the scroll position

### How?

This special-cases `ACTION_RESTORE` to synchronously process the action

and call `setState` when it's received, rather than creating a promise.

To consistently reproduce this behavior, I added an option to our

browser interface that'll allow us to programmatically trigger a CPU

slowdown.
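The special-casing described above can be sketched as follows. This is an illustrative model only, not the router's real implementation: `createDispatcher`, `ACTION_NAVIGATE`, the reducer, and the state shape are all hypothetical; only the `ACTION_RESTORE` name comes from the description.

```javascript
const ACTION_RESTORE = 'restore';
const ACTION_NAVIGATE = 'navigate'; // hypothetical async action

// Hypothetical dispatcher: most actions go through a promise queue, but
// ACTION_RESTORE is reduced and committed in the same tick.
function createDispatcher(reducer, initialState, setState) {
  let state = initialState;
  let queue = Promise.resolve();

  return function dispatch(action) {
    if (action.type === ACTION_RESTORE) {
      // No promise is created, so no delay is introduced before setState.
      state = reducer(state, action);
      setState(state);
      return undefined;
    }
    // Everything else is treated as async and processed in order.
    queue = queue.then(async () => {
      state = await reducer(state, action);
      setState(state);
    });
    return queue;
  };
}
```

In this model a restore action is visible to `setState` before `dispatch` returns, while any other action is only applied after at least one microtask.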

h/t to @alvarlagerlof for isolating the offending commit and sharing a

minimal reproduction.

Closes NEXT-1819

Likely addresses #58899 but the reproduction was too complex to verify.

If a build-time fetch cache is present from a previous build, we don't want to use it in a successive build when flush to disk is set to false, as that can unexpectedly serve stale data.

x-ref: [slack thread](https://vercel.slack.com/archives/C03S8ED1DKM/p1701266754905909)

Closes NEXT-1750

Co-authored-by: Zack Tanner <zacktanner@gmail.com>

We have identical `resetProject` code used in `bench/vercel` and our e2e workflow action. This updates the `resetProject` script to be side-effect free (hence removing the env var) and shares it between bench and e2e.

Closes NEXT-1731

Doesn't make any functional changes. Going through the current setup for isolated tests to figure out a better way to cache the repository setup, so that we don't have to wait ~30s+ when running tests locally.

BREAKING CHANGE

Since `next export` has been printing a deprecation warning since https://github.com/vercel/next.js/pull/47376, it's safe to remove in semver-major.

The upgrade path is to simply add `output: 'export'` in `next.config.js` - everything will continue to work the same.

This config greatly improves the `next dev` experience today. And in the future, it will improve performance of `next build` because we no longer need to do two passes (build then export).
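For reference, the upgrade path amounts to a one-line config change. A minimal `next.config.js`, assuming a CommonJS config file with no other options set:

```javascript
// next.config.js
/** @type {import('next').NextConfig} */
const nextConfig = {
  // Replaces the removed `next export` command: `next build` now
  // produces the static export directly.
  output: 'export',
};

module.exports = nextConfig;
```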

### What?

`globalThis.ReadableStream` and `globalThis.WritableStream` have been exposed since Node.js 18, which is our new default requirement. (#56943)

### Why?

This simplifies the code and might result in slightly better performance.

### How?

Drop any checks of `globalThis` properties that are always defined now.
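As an illustration of the kind of simplification this allows, the sketch below uses the stream globals directly with no feature check. `textStream` is a hypothetical helper, not code from this PR, and it assumes Node.js 18 or later.

```javascript
// With Node.js 18+ as the baseline, ReadableStream and TextEncoder can be
// used as globals without a `typeof globalThis.ReadableStream` guard.
function textStream(chunks) {
  const encoder = new TextEncoder();
  return new ReadableStream({
    start(controller) {
      for (const chunk of chunks) {
        controller.enqueue(encoder.encode(chunk));
      }
      controller.close();
    },
  });
}

const stream = textStream(['hello', ' ', 'world']);
```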

This PR adds the optional `limit` parameter on String.prototype.split uses.

> If provided, splits the string at each occurrence of the specified separator, but stops when limit entries have been placed in the array. Any leftover text is not included in the array at all.

[MDN](https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/String/split#syntax)

While the performance gain may not be significant for small texts, it can be huge for large ones.
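A short example of the pattern, using a made-up header value. Because leftover text is dropped, `limit` is only a drop-in change where the later entries were never read.

```javascript
const header = 'text/html; charset=utf-8; boundary=x';

// Without a limit, the whole string is scanned and three entries are built.
const allParts = header.split(';');

// With limit 1, splitting stops as soon as the first entry is found.
const mimeType = header.split(';', 1)[0];

// The rest of the string is not included in the result.
const firstTwo = 'a,b,c,d'.split(',', 2);
```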

I made a benchmark on the following repository : https://github.com/Yovach/benchmark-nodejs

On my machine, I get the following results:

`node index.js`

> normal 1: 570.092ms

> normal 50: 2.284s

> normal 100: 3.543s

`node index-optimized.js`

> optmized 1: 644.301ms

> optmized 50: 929.39ms

> optmized 100: 1.020s

The benchmark inputs are:

- "lorem-1" file contains 1 paragraph of "lorem ipsum"

- "lorem-50" file contains 50 paragraphs of "lorem ipsum"

- "lorem-100" file contains 100 paragraphs of "lorem ipsum"

### What?

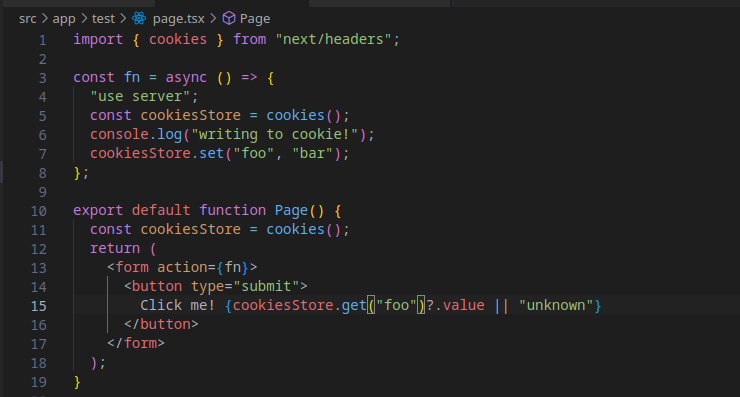

This implements Server Actions inside the new Turbopack next-api bundles.

### How?

Server Actions requires:

1. A `.next/server/server-reference-manifest.json` manifest describing what loader module to import to invoke a server action

2. A "loader" entry point that then imports server actions from our internal chunk items

3. Importing the bundled `react-experimental` module instead of regular `react`

4. A little 🪄 pixie dust

5. A small change in the magic comment generated in modules that export server actions

I had to change the magic `__next_internal_action_entry_do_not_use__` comment generated by the server actions transformer. When I traverse the module graph to find all exported actions _after chunking_ has been performed, we no longer have access to the original file name needed to generate the server action's id hash. Adding the filename to the comment allows me to recover this without overcomplicating our output pipeline.

Closes WEB-1279

Depends on https://github.com/vercel/turbo/pull/5705

Co-authored-by: Tim Neutkens <6324199+timneutkens@users.noreply.github.com>

Co-authored-by: Jiachi Liu <4800338+huozhi@users.noreply.github.com>

### What?

fixes

```

[Error: Invalid segment components, catch all segment must be the last segment

Debug info:

- Execution of directory_tree_to_entrypoints_internal failed

- Invalid segment components, catch all segment must be the last segment] {

code: 'GenericFailure'

}

```

Closes WEB-1668

Inferring the protocol from the request meta is not reliable when the next server is running over `http` but sitting behind an https proxy. This instead plumbs the experimental https flag through to the optimizer so we can more reliably determine the protocol

Fixes #55971

### What?

Minor improvement to the test fixture to clean up the instance when the tests are explicitly configured to continue. This is required to run Turbopack-related tests.

Closes WEB-1634

### What?

In some test failures we've observed that the test result is not written at all, for example:

```

Failed to load test output [Error: ENOENT: no such file or directory, open 'test/e2e/app-dir/edge-runtime-node-compatibility/edge-runtime-node-compatibility.test.ts.results.json'] {

errno: -2,

code: 'ENOENT',

syscall: 'open',

path: 'test/e2e/app-dir/edge-runtime-node-compatibility/edge-runtime-node-compatibility.test.ts.results.json'

}

```

Scrolling up in the log, this happened because the whole test process was taken down by an explicit call to `process.exit`.

We still want to collect test results for these failures, so this PR changes that behavior when the run is configured to continue on error.

Closes WEB-1628

When errors are thrown in middleware, headers could be re-sent for the same response:

```

Error [ERR_HTTP_HEADERS_SENT]: Cannot set headers after they are sent to the client

at __node_internal_captureLargerStackTrace (node:internal/errors:490:5)

at new NodeError (node:internal/errors:399:5)

at ServerResponse.setHeader (node:_http_outgoing:645:11)

at origSetHeader (/next.js/packages/next/src/server/base-server.ts:777:16)

at ServerResponse._res.setHeader (/next.js/packages/next/src/server/base-server.ts:777:16)

at setHeader (/next.js/packages/next/src/server/base-http/node.ts:84:15)

at renderErrorImpl (/next.js/packages/next/src/server/base-server.ts:2790:11)

at <anonymous> (/next.js/packages/next/src/server/base-server.ts:2777:19)

at trace (/next.js/packages/next/src/server/lib/trace/tracer.ts:213:14)

at DevServer.renderError (/next.js/packages/next/src/server/base-server.ts:2776:24)

at DevServer.renderError (/next.js/packages/next/src/server/next-server.ts:1299:18)

at DevServer.handleRequestImpl (/next.js/packages/next/src/server/base-server.ts:1185:23) {

code: 'ERR_HTTP_HEADERS_SENT'

}

```

Migrate middleware-errors test to e2e test to avoid flaky assertion due to duplicated logging collected in integration test.

Closes NEXT-1629

Some tests may depend on `latest` to help us catch problems earlier. This adds support for the `resolutions` field for tests to allow pinning of deps that may be problematic upstream.

## Logging Improvements

* Delay server start logging

Log the server start message after the request handler is created, so that when an error occurs (such as a missing "app" or "pages" directory) the start message is not logged and only the error message is shown.

* Fix jsconfig hmr case

Previously `jsconfig.json` was patched too early, which didn't trigger

the hmr reload, this PR enables it to show on the

* Adding timestamp for "ready" event

Display a timestamp after the "ready" message, so we can monitor how long it takes and keep improving its performance.

* Polish logging format

* Start with a capital letter

* Align the indentation

### What

Found a flaky test like https://github.com/vercel/next.js/actions/runs/6125719281/job/16628276301?pr=55118#step:29:174, where `get-port` throws because the port is not available. Looking into it, there seems to be an upstream fix that should improve the situation, but unfortunately it landed after get-port switched to native ESM only, so bumping is non-trivial work. Instead, this adopts get-port-please as a replacement but leaves get-port as a fallback for a while to verify its stability. Once we are certain, we can remove the old get-port entirely.

### What?

https://vercel.slack.com/archives/C04KC8A53T7/p1694190462958199

I realized we throw an error when attempting to call destroy on an undefined instance if `beforeAll` fails for any reason. This error is redundant: if the next instance doesn't exist, there is likely an upstream test failure already, and the destroy-against-undefined error is not useful for debugging anyway.

This PR fixes `nextTestSetup`'s teardown first; the remaining places that call `next.destroy` explicitly will be amended later.

### What?

Switch the default for `--turbo` to the new `--experimental-turbo`, and remove the old code in Next.js.

### Why?

The new approach will be used in the future.

Closes WEB-1506

While investigating the HMR event types I noticed `pong` is not used in either Pages Router or App Router.

This PR removes the code that sends it as it's essentially dead code.

Follow up to https://github.com/vercel/next.js/pull/54081 -- this was

restoring the router tree improperly causing an error on bfcache hits

Had to override some default behaviors to prevent `forward` / `back` in

playwright from hanging indefinitely since no load event is firing in

these cases

Fixes #54184

Closes NEXT-1528

When an mpa navigation takes place, we currently push the user to the new route and suspend the page indefinitely (x-ref: #49058). When navigating back, if the browser opts into using the [bfcache](https://web.dev/bfcache/), it will remain suspended and `pushRef.mpaNavigation` will be true. This means that anything that would cause the component to re-render will trigger the mpa navigation again (such as hovering over another `Link`, as reported in #53347)

This PR checks to see if bfcache is being used by observing `PageTransitionEvent.persisted` and if so, resets the router state to clear out `pushRef`.
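The check can be sketched as below. This is a simplified illustration, not the router code: `handlePageShow` and the reset callback are hypothetical names, and the listener is only attached when a `window` exists. The `pageshow` event and its `persisted` flag are standard browser APIs.

```javascript
// Hypothetical handler: `persisted` is true when the page was restored
// from the bfcache rather than loaded fresh.
function handlePageShow(event, resetRouterState) {
  if (event.persisted) {
    // Clear any pending MPA navigation so a re-render does not replay it.
    resetRouterState();
    return true;
  }
  return false;
}

if (typeof window !== 'undefined') {
  window.addEventListener('pageshow', (event) => {
    handlePageShow(event, () => {
      // Placeholder for resetting router state (e.g. clearing pushRef).
    });
  });
}
```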

Closes NEXT-1511

Fixes #53347

### What?

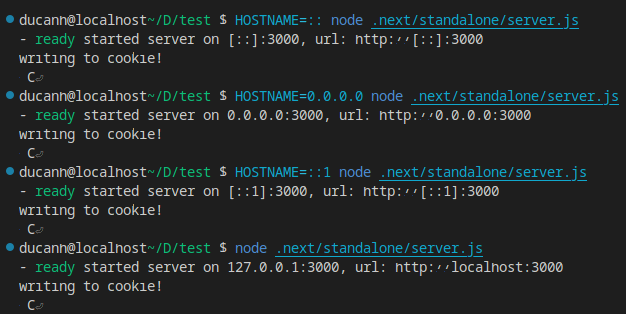

This PR makes it easier to use Next.js with IPv6 hostnames such as `::1` and `::`.

### How?

It does so by removing the rewrites from `localhost` to `127.0.0.1` introduced in #52492. It also fixes the issue where Next.js tries to fetch something like `http://::1:3000` when `--hostname` is `::1`, which is not a valid URL (browsers' `URL` class throws an error when constructed with such hosts). It also fixes `NextURL` so that it no longer accepts the invalid `http://::1:3000` while refusing the valid `http://[::1]:3000`. It also changes `next/src/server/lib/setup-server-worker.ts` so that it uses the server's `address` method to retrieve the host instead of our provided `opts.hostname`, ensuring that no matter what `opts.hostname` is we will always get the correct one.
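To make the URL problem concrete, here is a small sketch of bracketing an IPv6 hostname before building a URL. `formatHostname` and `toOrigin` are hypothetical helpers written for this example, and the "contains a colon" test is a simplifying assumption rather than a full IPv6 check.

```javascript
// Assumption: any hostname containing ':' is an IPv6 literal and must be
// wrapped in brackets before it can appear in a URL.
function formatHostname(hostname) {
  if (hostname.includes(':') && !hostname.startsWith('[')) {
    return `[${hostname}]`;
  }
  return hostname;
}

function toOrigin(hostname, port) {
  return `http://${formatHostname(hostname)}:${port}`;
}

const origin = toOrigin('::1', 3000);
const parsed = new URL(origin); // parses, unlike new URL('http://::1:3000')
```

Hostnames such as `localhost` or `127.0.0.1` pass through unchanged.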

### Note

I've verified that `next dev`, `next start` and `node .next/standalone/server.js` work with IPv6 hostnames (such as `::` and `::1`), IPv4 hostnames (such as `127.0.0.1`, `0.0.0.0`) and `localhost` - and with any of these hostnames fetching to `localhost` also works. Server Actions and middleware have no problems as well.

This also removes `.next/standalone/server.js`'s logging as we now use `start-server`'s logging to avoid duplicates. `start-server`'s logging has also been updated to report the actual hostname.

The above pictures also demonstrate using Server Actions with Next.js after this PR.

Fixes #53171

Fixes #49578

Closes NEXT-1510

Co-authored-by: Tim Neutkens <6324199+timneutkens@users.noreply.github.com>

Co-authored-by: Zack Tanner <1939140+ztanner@users.noreply.github.com>

## Vendoring

Updates all module resolvers (node, webpack, nft for entrypoints, and nft for next-server) to consider whether vendored packages are suitable for a given resolve request and resolves them in an import semantics preserving way.

### Problem

Prior to the proposed change, vendoring has been accomplished by aliasing module requests from one specifier to a different specifier. For instance if we are using the built-in react packages for a build/runtime we might replace `require('react')` with `require('next/dist/compiled/react')`.

However this aliasing introduces a subtle bug. The React package has an export map that considers the condition `react-server` and when you require/import `'react'` the conditions should be considered and the underlying implementation of react may differ from one environment to another. In particular if you are resolving with the `react-server` condition you will be resolving the `react.shared-subset.js` implementation of React. This aliasing however breaks these semantics because it turns a bare specifier resolution of `react` with path `'.'` into a resolution with bare specifier `next` with path `'/dist/compiled/react'`. Module resolvers consider export maps of the package being imported from but in the case of `next` there is no consideration for the condition `react-server` and this resolution ends up pulling in the `index.js` implementation inside the React package by doing a simple path resolution to that package folder.
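The difference can be illustrated with a toy resolver. This is a deliberately simplified model of conditional exports, not Node's or webpack's actual algorithm: `resolveExport` is hypothetical, and the export map is reduced to the two files named above.

```javascript
// Reduced export map for the `react` package, modeled on the description.
const reactExports = {
  '.': {
    'react-server': './react.shared-subset.js',
    default: './index.js',
  },
};

// Hypothetical resolver: pick the first condition that is active.
function resolveExport(exportsMap, subpath, conditions) {
  const target = exportsMap[subpath];
  if (target === undefined) return undefined;
  if (typeof target === 'string') return target;
  for (const [condition, file] of Object.entries(target)) {
    if (condition === 'default' || conditions.includes(condition)) {
      return file;
    }
  }
  return undefined;
}

// Bare specifier `react`: the export map and conditions are consulted.
const serverEntry = resolveExport(reactExports, '.', ['react-server']);
const defaultEntry = resolveExport(reactExports, '.', []);
```

A request for `next/dist/compiled/react` never reaches this map, because the bare specifier is `next` and the remainder is treated as a plain path, so the `react-server` condition has no effect.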

To work around this bug there is a prevalence of encoding the "right" resolution into the import itself. We for instance directly alias `react` to `next/dist/compiled/react/react.shared-subset.js` in certain cases. Other times we directly specify the runtime variant, for instance `react-server-dom-webpack/server.edge` rather than `react-server-dom-webpack/server`, bypassing the export map altogether by selecting the runtime specific variant. However some code is meant to run in more than one runtime, for instance anything that is part of the client bundle which executes on the server during SSR and in the browser. There are workarounds like using `require` conditionally or `import(...)` dynamically but these all have consequences for bundling and treeshaking and they still require careful consideration of the environment you are running in and which variant needs to load.

The result is that there is a large amount of manual pinning of aliases and additional complexity in the code and an inability to trust the package to specify the right resolution potentially causing conflicts in future versions as packages are updated.

It should be noted that aliasing is not in and of itself problematic when we are trying to implement a sort of lightweight forking based on build or runtime conditions. We have good examples of this for instance with the `next/head` package which within App Router should export a noop function. The problem is when we are trying to vendor an entire package and have the package behave semantically the same as if you had installed it yourself via node_modules

### Solution

The fix is seemingly straightforward. We need to stop aliasing these module specifiers and instead customize the resolution process to resolve from a location that will contain the desired vendored packages. We can then start simplifying our imports to use top level package resources and generally let import conditions control the process of providing the right variant in the right context.

It should be said that vendoring is conditional. Currently we only vendor react packages for App Router runtimes. The implementation needs to be able to conditionally determine where a package resolves based on whether we're in an App Router context vs a Pages Router one.

Additionally the implementation needs to support alternate packages such as supporting the experimental channel for React when using features that require this version.

### Implementation

The first step is to put the vendored packages inside a node_modules folder. This is essential to the correct resolving of packages by most tools that implement module resolution. For packages that are meant to be vendored, meaning whole package substitution, we move them from `next/(src|dist)/compiled/...` to `next/(src|dist)/vendored/node_modules`. The purpose of this move is to clarify that vendored packages operate with a different implementation. This initial PR moves the react dependencies for App Router and `client-only` and `server-only` packages into this folder. In the future we can decide which other precompiled dependencies are best implemented as vendored packages and move them over.

It should be noted that because of our use of `JestWorker` we can get warnings for duplicate package names, so we modify the vendored packages for react, adding either `-vendored` or `-experimental-vendored` depending on which release channel the package came from. While this will require us to alter the request string for a module specifier, it will still be treating the react package as the bare specifier and thus use the export map as required.

#### module resolvers

The next thing we need to do is have all systems that do module resolution implement a custom module resolution step. There are five different resolvers that need to be considered.

##### node runtime

Updated the require-hook to resolve from the vendored directory without rewriting the request string to alter which package is identified in the bare specifier. For react packages we only do this vendoring if the `process.env.__NEXT_PRIVATE_PREBUNDLED_REACT` envvar is set indicating the runtime is server App Router builds. If we need a single node runtime to be able to conditionally resolve to both vendored and non vendored versions we will need to combine this with aliasing and encode whether the request is for the vendored version in the request string. Our current architecture does not require this though so we will just rely on the envvar for now

##### webpack runtime

Removed all aliases configured for react packages. Rely on the node-runtime to properly alias external react dependencies. Add a resolver plugin `NextAppResolverPlugin` to preemptively perform resolution from the context of the vendored directory when encountering a vendored eligible package.

##### turbopack runtime

Updated the aliasing rules for react packages to resolve from the vendored directory when in an App Router context. This implementation is all essentially config because the capability of doing the resolve from any position (i.e. the vendored directory) already exists.

##### nft entrypoints runtime

Track chunks to trace for App Router separately from Pages Router. For the App Router chunk trace, use a custom resolve hook in nft which performs the resolution from the vendored directory when appropriate.

##### nft next-server runtime

The current implementation for next-server traces both node_modules and vendored versions of packages so all versions are included. This is necessary because the next server can run in either context (App vs Pages Router) and may depend on any possible variants. We could in theory make two traces rather than a combined one but this will require additional downstream changes, so for now it is the most conservative thing to do and is correct.

Once we have the correct resolution semantics for all resolvers we can start to remove imports that target our precompiled packages, for instance replacing `import ... from "next/dist/compiled/react-server-dom-webpack/client"` with `import ... from "react-server-dom-webpack/client"`.

We can also stop requiring runtime specific variants like `import ... from "react-server-dom-webpack/client.edge"` replacing it with the generic export `"react-server-dom-webpack/client"`

There are still two special case aliases related to react

1. In profiling mode (browser only) we rewrite `react-dom` to `react-dom/profiling` and `scheduler/tracing` to `scheduler/tracing-profiling`. This can be moved to using export maps and conditions once react publishes updates that implement this on the package side.

2. When resolving `react-dom` on the server we rewrite this to `react-dom/server-rendering-stub`. This is to avoid loading the entire react-dom client bundle on the server when most of it goes unused. In the next major react will update this top level export to only contain the parts that are usable in any runtime and this alias can be dropped entirely

There are two non-react packages currently being vendored that I have maintained, but I think we ought to discuss the validity of vendoring them. The `client-only` and `server-only` packages are vendored so you can use them without having to remember to install them into your project. This is convenient but does perhaps become surprising if you don't realize what is happening. We should consider not doing this but we can make that decision in another discussion/PR.

#### Webpack Layers

One of the things our webpack config implements for App Router is layers which allow us to have separate instances of packages for the server components graph and the client (ssr) graph. The way we were managing layer selection was a bit arbitrary, so in addition to the other webpack changes the way you cause a file to always end up in a specific layer is to end it with `.serverlayer`, `.clientlayer` or `.sharedlayer`. These act as layer portals so something in the server layer can import `foo.clientlayer` and that module will in fact be bundled in the client layer.

#### Packaging Changes

Most package managers are fine with this resolution redirect however yarn berry (yarn 2+ with PnP) will not resolve packages that are not defined in a package.json as a dependency. This was not a problem with the prior strategy because it was never resolving these vendored packages it was always resolving the next package and then just pointing to a file within it that happened to be from react or a related package.

To get around this issue vendored packages are both committed in src and packed as a tgz file. Then in the next package.json we define these vendored packages as `optionalDependencies` pointing to these tarballs. For yarn PnP these packed versions will get used and resolved rather than the locally committed src files. For other package managers the optional dependencies may or may not get installed but the resolution will still resolve to the checked in src files. This isn't a particularly satisfying implementation and if pnpm were to be updated to have consistent behavior installing from tarballs we could actually move the vendoring entirely to dependencies and simplify our resolvers a fair bit. But this will require an upstream change in pnpm and would take time to propagate in the community since many use older versions.

#### Upstream Changes

As part of this work I landed some other changes upstream that were necessary. One was to make our packing use `npm` to match our publishing step. This also allows us to pack `node_modules` folders which is normally not supported but is possible if you define the folder in the package.json's files property.

See: #52563

Additionally nft did not provide a way to use the internal resolver if you were going to use the resolve hook so that is now exposed

See: https://github.com/vercel/nft/pull/354

#### additional PR trivia

* When we prepare to make an isolated next install for integration tests we exclude node_modules by default so we have a special case to allow `/vendored/node_modules`

* The webpack module rules were refactored to be a little easier to reason about. While they do work as is, it would be better for some of them to be wrapped in a `oneOf` rule; however, there is a bug in our css loader implementation that causes these oneOf rules to get deleted. We should fix this up in a followup to make the rules a little more robust.

## Edits

* I removed `.sharedlayer` since this concept is leaky (not really related to the client/server boundary split) and it is getting refactored anyway soon into a precompiled runtime.